SE 616 – Introduction to Software Engineering

Lecture 9

Project Management (Chapter 21)

Management Spectrum

The 4 P’s

- People — the most important element of a successful project

- Senior managers - define business issues

- Project (technical) managers - control practitioners

- Practitioners - design and build systems

- Customers - set software requirements

- End-users - interact with delivered software

- Product — the software to be built

- Context - how it fits into larger system

- Information Objectives - what data comes into and goes out of the software

- Function and Performance

- Process — the set of framework activities and software engineering tasks to get the job done

- Software engineering paradigm used

- Project — all work required to make the product a reality

Stakeholders

- Senior managers who define the business issues that often have significant influence on the project.

- Project (technical) managers who must plan, motivate, organize, and control the practitioners who do software work.

- Practitioners who deliver the technical skills that are necessary to engineer a product or application.

- Customers who specify the requirements for the software to be engineered and other stakeholders who have a peripheral interest in the outcome.

- End-users who interact with the software once it is released for production use.

Software Projects

Factors that influence the end result ...

- size

- delivery deadline

- budgets and costs

- application domain

- technology to be implemented

- system constraints

- user requirements

- available resources

Project Management Concerns

- Product Quality

- Risk Assessment

- Measurement

- Cost Estimation

- Project Scheduling

- Customer Communication

- Staffing

- Other Resources

- Project Monitoring

Why Projects Fail

- an unrealistic deadline is established

- changing customer requirements

- an honest underestimate of effort

- predictable and/or unpredictable risks

- technical difficulties

- miscommunication among project staff

- failure in project management

Software Teams

The following factors must be considered when selecting a software project team structure ...

- the difficulty of the problem to be solved

- the size of the resultant program(s) in lines of code or function points

- the time that the team will stay together (team lifetime)

- the degree to which the problem can be modularized

- the required quality and reliability of the system to be built

- the rigidity of the delivery date

- the degree of sociability (communication) required for the project

Organizational Paradigms

- closed paradigm — structures a team along a traditional hierarchy of authority

- random paradigm — structures a team loosely and depends on individual initiative of the team members

- open paradigm — attempts to structure a team in a manner that achieves some of the controls associated with the closed paradigm but also much of the innovation that occurs when using the random paradigm

- synchronous paradigm — relies on the natural compartmentalization of a problem and organizes team members to work on pieces of the problem with little active communication among themselves

Avoid Team “Toxicity”

- A frenzied work atmosphere in which team members waste energy and lose focus on the objectives of the work to be performed.

- High frustration caused by personal, business, or technological factors that cause friction among team members.

- “Fragmented or poorly coordinated procedures” or a poorly defined or improperly chosen process model that becomes a roadblock to accomplishment.

- Unclear definition of roles resulting in a lack of accountability and resultant finger-pointing.

- “Continuous and repeated exposure to failure” that leads to a loss of confidence and a lowering of morale.

Agile Teams

- Team members must have trust in one another.

- The distribution of skills must be appropriate to the problem.

- Mavericks may have to be excluded from the team, if team cohesiveness is to be maintained.

- Team is “self-organizing”

- An adaptive team structure

- Uses elements of Constantine’s random, open, and synchronous paradigms

- Significant autonomy

Team Coordination & Communication

- Formal, impersonal approaches include software engineering documents and work products (including source code), technical memos, project milestones, schedules, project control tools (Chapter 23), change requests and related documentation, error tracking reports, and repository data (see Chapter 26).

- Formal, interpersonal procedures focus on quality assurance activities (Chapter 25) applied to software engineering work products. These include status review meetings and design and code inspections.

- Informal, interpersonal procedures include group meetings for information dissemination and problem solving and “collocation of requirements and development staff.”

- Electronic communication encompasses electronic mail, electronic bulletin boards, and by extension, video-based conferencing systems.

- Interpersonal networking includes informal discussions with team members and those outside the project who may have experience or insight that can assist team members.

Defining the Problem

The Product Scope

- Scope

- Context. How does the software to be built fit into a larger system, product, or business context and what constraints are imposed as a result of the context?

- Information objectives. What customer-visible data objects (Chapter 8) are produced as output from the software? What data objects are required for input?

- Function and performance. What function does the software perform to transform input data into output? Are any special performance characteristics to be addressed?

- Software project scope must be unambiguous and understandable at the management and technical levels.

Problem Decomposition

- Sometimes called partitioning or problem elaboration

- Once scope is defined …

- It is decomposed into constituent functions

- It is decomposed into user-visible data objects, or

- It is decomposed into a set of problem classes

- Decomposition process continues until all functions or problem classes have been defined

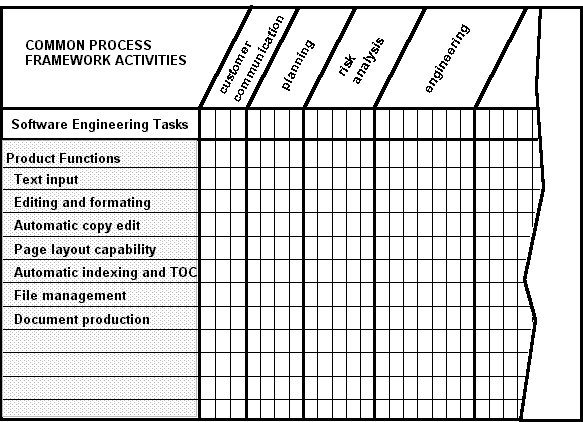

The Process

- Once a process framework has been established:

- Consider project characteristics

- Determine the degree of rigor required

- Define a task set for each software engineering activity

- Task set = software engineering tasks, work products, quality assurance points, and milestones

Melding Problem and Process

Typical Customer Communication Activity

The Project

- Projects get into trouble when …

- Software people don’t understand their customer’s needs.

- The product scope is poorly defined.

- Changes are managed poorly.

- The chosen technology changes.

- Business needs change [or are ill-defined].

- Deadlines are unrealistic.

- Users are resistant.

- Sponsorship is lost [or was never properly obtained].

- The project team lacks people with appropriate skills.

- Managers [and practitioners] avoid best practices and lessons learned.

Common-Sense Approach to Projects

- Start on the right foot. This is accomplished by working hard (very hard) to understand the problem that is to be solved and then setting realistic objectives and expectations.

- Maintain momentum. The project manager must provide incentives to keep turnover of personnel to an absolute minimum, the team should emphasize quality in every task it performs, and senior management should do everything possible to stay out of the team’s way.

- Track progress. For a software project, progress is tracked as work products (e.g., models, source code, sets of test cases) are produced and approved (using formal technical reviews) as part of a quality assurance activity.

- Make smart decisions. In essence, the decisions of the project manager and the software team should be to “keep it simple.”

- Conduct a postmortem analysis. Establish a consistent mechanism for extracting lessons learned for each project.

Getting the Essence of a Project (W5HH Principle - B. Boehm)

- Why is the system being developed?

- What will be done? By when?

- Who is responsible for a function?

- Where are they organizationally located?

- How will the job be done technically and managerially?

- How much of each resource (e.g., people, software, tools, database) will be needed?

Critical Practices

- Formal risk analysis

- Top 10 risks, probability of becoming a problem, impact

- Empirical cost and schedule estimation

- Current estimated size of program

- Metrics-based project management

- Earned value tracking

- Defect tracking against quality targets

- People-aware project management
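The formal risk analysis practice above lists probability and impact for each of the top risks. One common way to rank them, which the notes do not spell out, is risk exposure = probability × impact. The sketch below assumes that definition; the `Risk` class, the `top_risks` helper, and the sample figures are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Risk:
    name: str
    probability: float  # chance (0.0 to 1.0) that the risk becomes a problem
    impact: float       # estimated cost if it does, in any consistent unit

    @property
    def exposure(self) -> float:
        # Assumed ranking rule: exposure = probability x impact.
        return self.probability * self.impact


def top_risks(risks, n=10):
    """Return the n risks with the highest exposure, largest first."""
    return sorted(risks, key=lambda r: r.exposure, reverse=True)[:n]


# Illustrative data only (impact in person-days).
risks = [
    Risk("Key staff turnover", 0.30, 40.0),
    Risk("Requirements change late", 0.60, 25.0),
    Risk("Chosen technology underperforms", 0.10, 80.0),
]

ranked = top_risks(risks)
for r in ranked:
    print(f"{r.name}: exposure = {r.exposure:.1f}")
```

A high-impact risk can rank low if it is unlikely, which is why both columns are tracked rather than impact alone.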

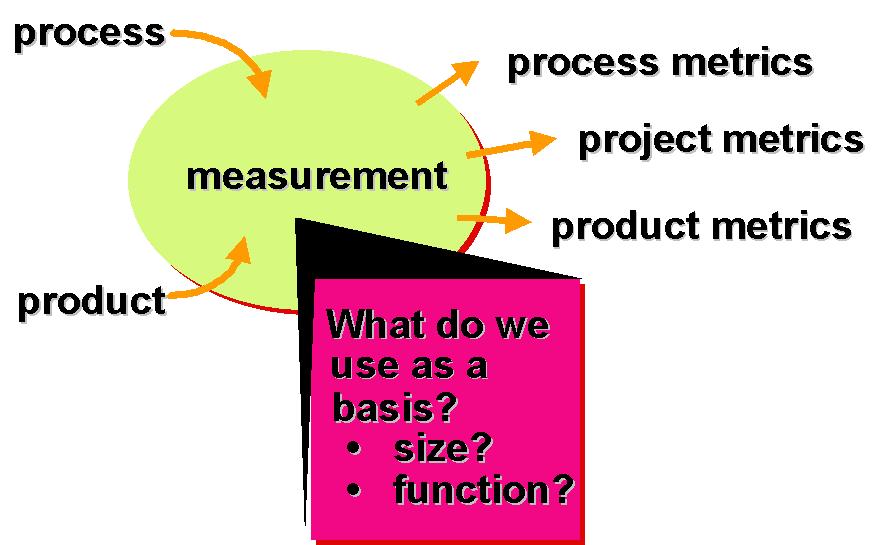

Process

and Project Metrics (Chapter 22)

Measurement & Metrics

... collecting metrics is too hard ...

... it's too time-consuming

... it's too political

... it won't prove anything

Anything that you need to quantify can be measured in some way that is superior to not measuring it at all ...

What is a Metric?

A quantitative measure of the degree to which a system, component, or process possesses a given attribute.

Why do we Measure?

- assess the status of an ongoing project

- track potential risks

- uncover problem areas before they go “critical”

- adjust work flow or tasks

- evaluate the project team’s ability to control quality of software work products

A Good Manager Measures

Process Measurement

- We measure the efficacy of a software process indirectly.

- That is, we derive a set of metrics based on the outcomes that can be derived from the process.

- Outcomes include

- measures of errors uncovered before release of the software

- defects delivered to and reported by end-users

- work products delivered (productivity)

- human effort expended

- calendar time expended

- schedule conformance

- other measures.

- We also derive process metrics by measuring the characteristics of specific software engineering tasks.

Process Metrics Guidelines

- Use common sense and organizational sensitivity when interpreting metrics data.

- Provide regular feedback to the individuals and teams who collect measures and metrics.

- Don’t use metrics to appraise individuals.

- Work with practitioners and teams to set clear goals and metrics that will be used to achieve them.

- Never use metrics to threaten individuals or teams.

- Metrics data that indicate a problem area should not be considered “negative.” These data are merely an indicator for process improvement.

- Don’t obsess on a single metric to the exclusion of other important metrics.

Process Metrics

- majority focus on quality achieved as a consequence of a repeatable or managed process

- statistical SQA data: error categorization & analysis

- defect removal efficiency: propagation from phase to phase

- reuse data

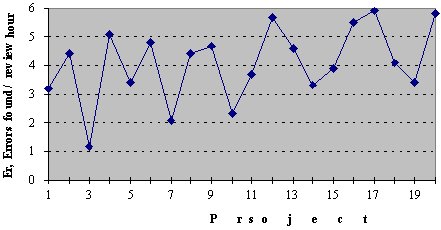

Project Metrics

- Effort / time per SE task

- Errors uncovered per review hour

- Scheduled vs. actual milestone dates

- Changes (number) and their characteristics

- Distribution of effort on SE tasks
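Several of the project metrics above are simple ratios or differences. The following is a minimal sketch of three of them; the function names and the sample numbers are hypothetical, chosen only to illustrate the arithmetic.

```python
from datetime import date


def errors_per_review_hour(errors_found: int, review_hours: float) -> float:
    """Errors uncovered per hour spent in reviews."""
    if review_hours <= 0:
        raise ValueError("review_hours must be positive")
    return errors_found / review_hours


def milestone_slip_days(scheduled: date, actual: date) -> int:
    """Positive when the milestone was reached late, negative when early."""
    return (actual - scheduled).days


def effort_distribution(hours_by_task: dict) -> dict:
    """Fraction of total effort spent on each software engineering task."""
    total = sum(hours_by_task.values())
    if total <= 0:
        raise ValueError("total effort must be positive")
    return {task: hours / total for task, hours in hours_by_task.items()}


# Illustrative data only.
rate = errors_per_review_hour(errors_found=12, review_hours=8.0)
slip = milestone_slip_days(date(2024, 3, 1), date(2024, 3, 8))
shares = effort_distribution(
    {"analysis": 20, "design": 30, "coding": 30, "testing": 20}
)

print(rate, slip, shares)
```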

Product Metrics

- focus on the quality of deliverables

- measures of analysis model

- complexity of the design

- internal algorithmic complexity

- architectural complexity

- data flow complexity

- code measures (e.g., Halstead)

- measures of process effectiveness

- e.g., defect removal efficiency



Software Measurement

Measurements

- Direct - Cost and effort applied

- LOC - lines of code

- execution speed

- defects reported over time period

- Indirect - functionality, quality, complexity, efficiency, reliability

Normalization for Metrics

- Normalized data are used to evaluate the process and the product

- Size-oriented normalization - line-of-code approach

- Function-oriented normalization - function point approach

Size-Oriented Metrics

- errors per KLOC (thousand lines of code)

- defects per KLOC

- $ per LOC

- pages of documentation per KLOC

- errors per person-month

- LOC per person-month

- $ per page of documentation
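The size-oriented metrics above all normalize raw project totals by size, effort, or documentation volume. A minimal sketch of the arithmetic follows; the function name, the dictionary keys, and the sample project totals are hypothetical.

```python
def size_oriented_metrics(loc, errors, defects, cost, doc_pages, person_months):
    """Normalize raw project totals into the size-oriented metrics listed above."""
    if loc <= 0 or doc_pages <= 0 or person_months <= 0:
        raise ValueError("loc, doc_pages and person_months must be positive")
    kloc = loc / 1000  # thousand lines of code
    return {
        "errors_per_kloc": errors / kloc,
        "defects_per_kloc": defects / kloc,
        "cost_per_loc": cost / loc,
        "doc_pages_per_kloc": doc_pages / kloc,
        "errors_per_person_month": errors / person_months,
        "loc_per_person_month": loc / person_months,
        "cost_per_doc_page": cost / doc_pages,
    }


# Illustrative totals for one finished project.
metrics = size_oriented_metrics(
    loc=10_000, errors=120, defects=30,
    cost=150_000, doc_pages=400, person_months=20,
)

for name, value in metrics.items():
    print(f"{name}: {value:.2f}")
```

Because every value is a ratio, projects of different sizes can be compared, provided LOC is counted the same way in each.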

Function-Oriented Metrics - Function Points

- errors per FP

- defects per FP

- $ per FP

- pages of documentation per FP

- FP per person-month

Why Use FP Measures?

- independent of programming language

- uses readily countable characteristics of the "information domain" of the problem

- does not penalize inventive implementations that require fewer lines of code

- makes it easier to accommodate reuse and the trend toward object-oriented approaches

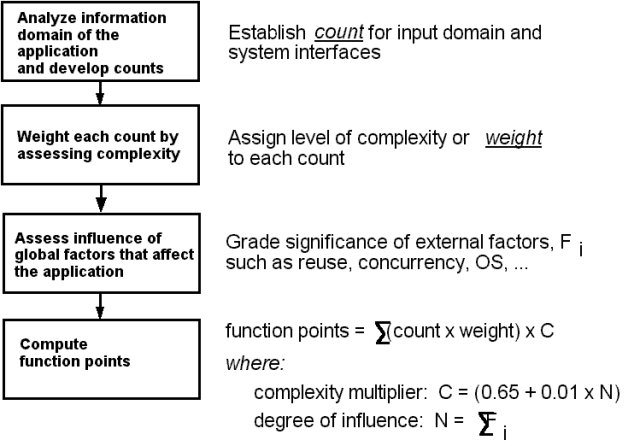

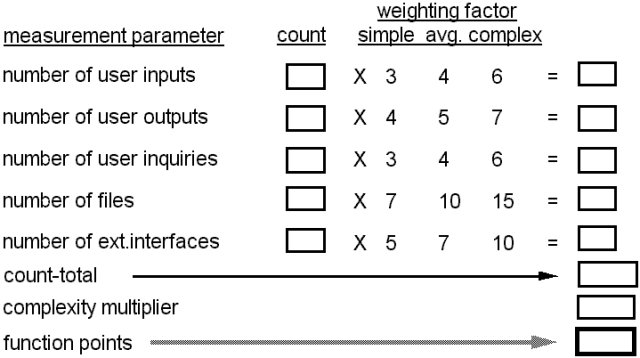

Computing Function Points

Sample Table for Calculating FP

- number of user inputs - input that provides distinct data

- number of user outputs - provides info to user (e.g., data items, screens, etc.)

- number of user inquiries - online input that creates an immediate response

- number of files - each logical master file

- number of external interfaces - machine readable interfaces

Taking Complexity into Account

(Factors are rated on a scale from 0 (Not Important) to 5 (Very Important).)

- data communications

- on-line update

- distributed functions

- complex processing

- heavy usage configuration

- installation ease

- transaction rate

- operational ease

- on-line data entry

- multiple sites

- end user efficiency

- facilitates change
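The notes list the five information-domain counts and the complexity factors but not the formula that combines them. The sketch below assumes the commonly published form of the function point calculation, FP = count total × (0.65 + 0.01 × sum of factor ratings), with the usual simple/average/complex weights. These values are assumptions, not taken from the notes, and the function names and sample counts are hypothetical.

```python
# Assumed weights per information-domain value: (simple, average, complex).
WEIGHTS = {
    "inputs": (3, 4, 6),
    "outputs": (4, 5, 7),
    "inquiries": (3, 4, 6),
    "files": (7, 10, 15),
    "interfaces": (5, 7, 10),
}
LEVELS = {"simple": 0, "average": 1, "complex": 2}


def count_total(counts, level="average"):
    """Weighted sum of the five information-domain counts."""
    idx = LEVELS[level]
    return sum(counts[key] * WEIGHTS[key][idx] for key in WEIGHTS)


def function_points(counts, factor_ratings, level="average"):
    """FP = count total x (0.65 + 0.01 x sum of complexity factor ratings)."""
    if any(not 0 <= rating <= 5 for rating in factor_ratings):
        raise ValueError("each factor must be rated from 0 to 5")
    return count_total(counts, level) * (0.65 + 0.01 * sum(factor_ratings))


# Illustrative counts; every factor in the list above is rated 3.
counts = {"inputs": 10, "outputs": 8, "inquiries": 5, "files": 4, "interfaces": 2}
ratings = [3] * 12

total = count_total(counts)
fp = function_points(counts, ratings)
print(total, round(fp, 2))
```

For simplicity the sketch applies one complexity level to all five counts; in practice each count is usually classified separately.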

Measuring Software Quality

- Correctness — the degree to which a program operates according to specification

- Maintainability — the degree to which a program is amenable to change

- Integrity — the degree to which a program is impervious to outside attack

- Usability — the degree to which a program is easy to use

Defect Removal Efficiency

DRE = errors / (errors + defects)

where

errors = problems found before release

defects = problems found after release

Ideal DRE = 1
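The DRE definition above translates directly into a small function. This is a minimal sketch with a hypothetical function name and sample counts; it raises an error when no problems have been recorded at all, since the ratio is undefined in that case.

```python
def defect_removal_efficiency(errors: int, defects: int) -> float:
    """DRE = errors / (errors + defects).

    errors  = problems found before release
    defects = problems found after release
    """
    total = errors + defects
    if total == 0:
        raise ValueError("DRE is undefined when no problems were recorded")
    return errors / total


# Illustrative counts: 95 problems caught before release, 5 reported after.
dre = defect_removal_efficiency(errors=95, defects=5)
print(f"DRE = {dre:.2f}")
```

As more problems are caught before release, DRE approaches the ideal value of 1.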

Metrics for Small Organizations

- time (hours or days) elapsed from the time a request is made until evaluation is complete, t_queue.

- effort (person-hours) to perform the evaluation, W_eval.

- time (hours or days) elapsed from completion of evaluation to assignment of change order to personnel, t_eval.

- effort (person-hours) required to make the change, W_change.

- time required (hours or days) to make the change, t_change.

- errors uncovered during work to make change, E_change.

- defects uncovered after change is released to the customer base, D_change.
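The seven measures above can be recorded per change request and combined into a few summary figures. The sketch below is one possible arrangement: the `ChangeMetrics` class is hypothetical, the total elapsed time assumes the three time intervals are consecutive and in the same unit, and the change-level DRE applies the same errors / (errors + defects) ratio used for defect removal efficiency.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ChangeMetrics:
    t_queue: float   # time from request until evaluation is complete
    w_eval: float    # person-hours spent on the evaluation
    t_eval: float    # time from end of evaluation to assignment of change order
    w_change: float  # person-hours required to make the change
    t_change: float  # time required to make the change
    e_change: int    # errors uncovered while making the change
    d_change: int    # defects uncovered after release to customers

    def total_elapsed(self) -> float:
        # Assumes the three intervals are consecutive and share one unit.
        return self.t_queue + self.t_eval + self.t_change

    def total_effort(self) -> float:
        return self.w_eval + self.w_change

    def dre(self) -> Optional[float]:
        """E_change / (E_change + D_change), or None if nothing was found."""
        found = self.e_change + self.d_change
        return self.e_change / found if found else None


# Illustrative change request (times in days, effort in person-hours).
change = ChangeMetrics(t_queue=2.0, w_eval=3.0, t_eval=1.0,
                       w_change=16.0, t_change=4.0, e_change=4, d_change=1)

print(change.total_elapsed(), change.total_effort(), change.dre())
```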

Establishing a Metrics Program

- Identify your business goals.

- Identify what you want to know or learn.

- Identify your subgoals.

- Identify the entities and attributes related to your subgoals.

- Formalize your measurement goals.

- Identify quantifiable questions and the related indicators that you will use to help you achieve your measurement goals.

- Identify the data elements that you will collect to construct the indicators that help answer your questions.

- Define the measures to be used, and make these definitions operational.

- Identify the actions that you will take to implement the measures.

- Prepare a plan for implementing the measures.

Managing Variation - Statistical Process Control

The mR (moving range) Control Chart
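The notes name the moving range chart without giving its construction. As a rough sketch under the usual textbook assumptions: the moving range is the absolute difference between successive data points, the center line is the mean of those ranges, and the upper control limit is that mean multiplied by 3.268 (also quoted as 3.267). The function names and the sample data are hypothetical.

```python
UCL_FACTOR = 3.268  # assumed standard constant for ranges of two consecutive points


def moving_ranges(values):
    """Absolute differences between successive data points."""
    return [abs(b - a) for a, b in zip(values, values[1:])]


def mr_chart(values):
    """Return (mean moving range, upper control limit, flagged indices).

    Flagged indices identify data points whose change from the previous
    point exceeds the upper control limit.
    """
    if len(values) < 2:
        raise ValueError("at least two data points are required")
    ranges = moving_ranges(values)
    mr_bar = sum(ranges) / len(ranges)
    ucl = UCL_FACTOR * mr_bar
    flagged = [i + 1 for i, r in enumerate(ranges) if r > ucl]
    return mr_bar, ucl, flagged


# Illustrative data: errors uncovered per review hour on successive projects.
data = [5, 6, 5, 6, 5, 6, 5, 20]
mr_bar, ucl, flagged = mr_chart(data)
print(mr_bar, round(ucl, 3), flagged)
```

A flagged point suggests the variation is not just ordinary noise in the process and is worth investigating before it is folded into a baseline.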